By Elizabeth Titus | Tue, May 23, 17

Have you ever made a New Year’s resolution? You know, you’ve wanted to do something like eat healthier, become the next “Biggest Loser”, or lift increasing amounts of weight in your workout. You do well at the outset, powered by excitement and engagement. After several months, though, you discover that your willpower starts to fade. This is, of course, one of the main challenges of continuous improvement efforts: even though we know that sticking to our plan can make a big difference over time, we get lost in the daily steps and lose sight of the end goal. Sometimes, we need a plan to keep us on track.

We can examine our energy-using habits the same way, especially the habits of industrial and commercial customers, who are typically the largest energy users. Strategic Energy Management (SEM), then, is that plan to keep us on track. SEM is a clever program design that engages large and medium industrial and commercial customers in energy efficiency, with a focus on comprehensive and continuous improvement. So, think of Strategic Energy Management as a weight-lifting program run by utilities for some of their “heavier” customers.

How does it work? The utility starts by assessing the facility, which includes modeling its energy consumption. It then works with company staff, from top management down, to identify opportunities for savings, typically through a training program for key employees. Along the way, the utility provides coaching. All the while, the company and the utility track their activity.

How can you tell if the program is working, or if it’s worthwhile? This falls within the domain of evaluation, measurement and verification (EM&V). EM&V is the equivalent of fitness check-ins meant to assess the combined effect of all the weight training and healthy habits. These check-ins monitor whether the changes last over time. Evaluating the results of SEM works in a similar way, but it is a little more challenging than hopping on the gym scale.

NEEP has been working with the US DOE and stakeholders in the region to help communicate the status of SEM, and has summarized some findings in a recent paper. The paper reviews available approaches to evaluating SEM, the range of results, the types of benefits associated with these programs, challenges associated with evaluation, and recommendations intended to help utilities and regulators assist industry and other businesses in taking advantage of the SEM program design.

Program Experience

As of 2016, SEM offerings from energy efficiency programs had served 707 industrial sites overall. In 2015, 10 programs provided services to 287 facilities. Most of the uptake so far is in the Northwest, although the Midwest, California, Canada, Vermont, and New York are building experience with SEM. The savings come from a mix of capital investment energy efficiency measures and operations, maintenance, and behavioral (OMB) changes. Some SEM programs claim all of these savings combined, others attribute only OMB savings to SEM, and still others serve as a pipeline to the utility’s capital investment programs.

Protocols to Guide Measurement and Evaluation

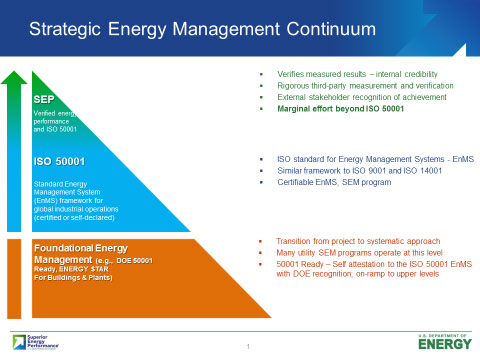

Several protocols and tools for measuring and evaluating SEM savings, most of them focused on the whole-facility or building level, are available. These include the International Performance Measurement and Verification Protocol (IPMVP), ISO 50001, the standards and protocols for Superior Energy Performance, and, hot off the press, a protocol from DOE’s Uniform Methods Project.

Areas where more guidance would help include interactive effects between OMB and capital investment programs, the treatment of baselines and persistence when disaggregating OMB savings from capital investment savings, assessing impacts from the implementation of multiyear programs, and, potentially, quantifying significant non-energy benefits.

Evaluation Measurement and Verification Experience

Results can provide early program feedback, serve as a basis for performance contract payments, or be used to claim savings for SEM programs. More program experience is needed to refine evaluation approaches, both to improve measurement accuracy and to measure savings across diverse program applications. Strategies that reduce evaluation costs, such as scaling up, homogeneous cohorts, energy management information systems (EMIS), and improved tracking, are being explored.

Negative savings are found in some facilities and need to be included in results to avoid bias. Multi-year impacts and changes over time should be captured. Long-term impacts (measure life) are not yet understood. Standardized reporting of results would help the industry, including transparency that distinguishes types of savings and programs (OMB vs. capital investment). Demand impacts, gas program impacts, and the quantification of non-energy benefits have no protocols yet and have received little attention in the evaluation literature.
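To illustrate why negative savings belong in the results, the sketch below sums site-level savings for a small SEM cohort twice: once with every site, and once with the negative-savings sites dropped. The site names and kWh values are invented for illustration; the point is only that truncating negative results overstates cohort savings.

```python
# Hypothetical per-site annual savings estimates (kWh) for an SEM cohort.
# Negative values mean the site used more energy than its modeled baseline.
site_savings_kwh = {
    "site_a": 120_000.0,
    "site_b": 45_000.0,
    "site_c": -30_000.0,
    "site_d": 80_000.0,
    "site_e": -15_000.0,
}

def cohort_savings(savings, drop_negative=False):
    """Sum site-level savings; dropping negative sites biases the total upward."""
    values = list(savings.values())
    if drop_negative:
        values = [v for v in values if v >= 0]
    return sum(values)

unbiased = cohort_savings(site_savings_kwh)
truncated = cohort_savings(site_savings_kwh, drop_negative=True)
print(f"All sites:         {unbiased:,.0f} kWh")
print(f"Negatives dropped: {truncated:,.0f} kWh")
print(f"Overstatement:     {truncated - unbiased:,.0f} kWh")
```

With these made-up numbers, the full cohort saves 200,000 kWh, while dropping the two negative sites reports 245,000 kWh.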

M&V 2.0

SEM program evaluation approaches already bear similarities to “M&V 2.0” (the use of advanced software analytics in conjunction with advanced metering infrastructure, or AMI, and other metering data). The benefits of this approach are that it could speed up feedback on continuous improvement and potentially reduce evaluation costs. For utilities with high penetration of smart meters, the analysis could be carried out for all efficiency projects, avoiding the problems associated with sampling in program evaluation. However, multi-year assessment of SEM program impacts would still be necessary to assess the persistence of impacts. A number of the challenges associated with the current whole-building, regression-based SEM evaluation approach also exist for M&V 2.0, such as the treatment of baselines, the need to apply non-routine adjustments, and the need for explanatory variables beyond weather, for example variables associated with production.[1]
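The whole-facility regression idea can be sketched in a few lines. This is a minimal illustration, not the method prescribed by any of the protocols named above: it assumes a linear monthly model with heating degree days (HDD), cooling degree days (CDD), and production as explanatory variables, uses entirely synthetic data, and ignores non-routine adjustments. The baseline model is fit on pre-SEM data, projected onto reporting-period conditions, and avoided energy is the adjusted baseline minus metered use.

```python
import numpy as np

# Hypothetical monthly data for one facility (all values synthetic).
# Baseline year: degree days and production before SEM engagement.
hdd_base = np.array([800, 700, 550, 350, 150, 30, 0, 10, 100, 350, 600, 780], dtype=float)
cdd_base = np.array([0, 0, 5, 20, 80, 200, 320, 290, 150, 30, 0, 0], dtype=float)
prod_base = np.array([1000, 1050, 980, 1100, 1120, 1080, 1000, 950, 1150, 1200, 1100, 1050], dtype=float)

# Reporting year: milder winter, warmer summer, and higher production.
hdd_rep = 0.9 * hdd_base
cdd_rep = 1.1 * cdd_base
prod_rep = prod_base + 100.0

def design_matrix(hdd, cdd, prod):
    """Intercept plus weather and production explanatory variables."""
    return np.column_stack([np.ones_like(hdd), hdd, cdd, prod])

def true_use(hdd, cdd, prod):
    # Synthetic "true" energy model (kWh/month), used only to generate data.
    return 50_000 + 20 * hdd + 35 * cdd + 4 * prod

rng = np.random.default_rng(seed=0)
y_base = true_use(hdd_base, cdd_base, prod_base) + rng.normal(0, 200, 12)
# Assumption: SEM cuts about 5,000 kWh/month during the reporting year.
y_rep = true_use(hdd_rep, cdd_rep, prod_rep) - 5_000 + rng.normal(0, 200, 12)

# 1. Fit the baseline model by ordinary least squares.
coef, *_ = np.linalg.lstsq(design_matrix(hdd_base, cdd_base, prod_base), y_base, rcond=None)

# 2. Project the baseline model onto reporting-period weather and production.
y_counterfactual = design_matrix(hdd_rep, cdd_rep, prod_rep) @ coef

# 3. Avoided energy = adjusted baseline minus metered use.
avoided_kwh = float((y_counterfactual - y_rep).sum())
print(f"Avoided energy over reporting year: {avoided_kwh:,.0f} kWh")
```

Because production rises in the reporting year, a weather-only model would attribute some of the added load to poor performance; including production as a regressor keeps that load in the adjusted baseline. The same structure applies whether the meter data come from monthly bills or AMI intervals.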

Non-Energy Impacts

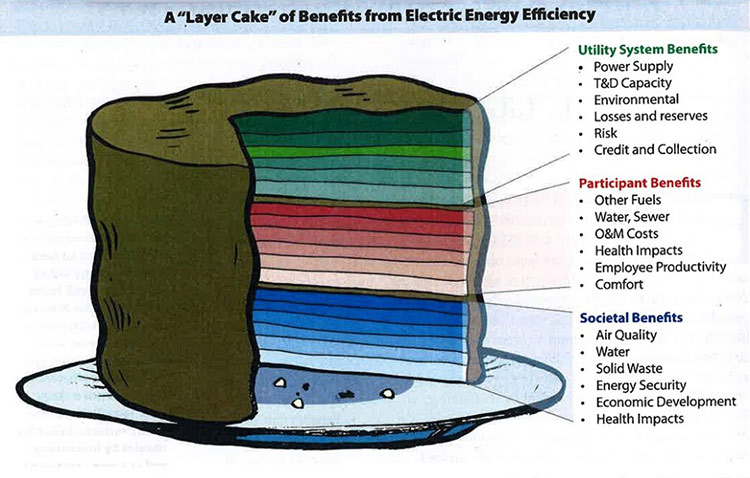

SEM programs have a variety of non-energy impacts (benefits) of value to society, utilities, or participating customers. Estimation of non-energy impacts, however, is limited and is not commonly included as part of SEM program evaluation. Evaluators often examine production efficiency as a variable in regression models and should communicate this as an economic benefit of the SEM program. Customers’ activity logs and other tracking of non-energy impacts should be incorporated into formal data collection efforts and templates so that evaluators can document and/or estimate these impacts for various purposes, including marketing the program and providing inputs to cost-effectiveness analysis. Evaluation methods used to estimate non-energy impacts from other commercial and industrial energy efficiency programs, such as self-report surveys combined with engineering information, should be explored for transferability to SEM programs.

As part of national protocol development in support of SEM, consideration should be given to developing a web-based tool and database focused on the industrial sector that includes the following elements: a method for assessing non-energy impacts of energy efficiency projects, a non-energy impact database that allows users to search by project type, case studies with details, and a questionnaire to assist utilities with identification and assessment of non-energy impacts.

Cost-Effectiveness

The Total Resource Cost (TRC) test is the most widely used tool for assessing the cost-effectiveness of SEM programs; this test considers participant as well as program administrator costs and benefits. The US DOE’s Superior Energy Performance program provides templates and tools to assist utilities in categorizing and applying the TRC test for SEM programs. Utilities should apply the TRC test symmetrically, quantifying both the costs and the benefits of any element included in the test, to reduce or avoid bias.
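A simplified sketch shows how asymmetry biases the TRC result. It assumes all inputs are already present values, that incentive payments (a transfer between utility and participant) are excluded from administrator costs, and that the dollar figures are hypothetical. Counting participant costs while leaving participant non-energy benefits (NEBs) at zero drives the ratio down.

```python
from dataclasses import dataclass

@dataclass
class TrcInputs:
    """Hypothetical present-value inputs (dollars) for a simplified TRC test."""
    avoided_energy_costs: float  # energy and capacity benefits from savings
    participant_nebs: float      # participant non-energy benefits (e.g., O&M, productivity)
    admin_costs: float           # program administrator costs, excluding incentives
    participant_costs: float     # participant costs (staff time, equipment)

def trc_ratio(x: TrcInputs, count_participant_nebs: bool = True) -> float:
    """Benefit-cost ratio; participant costs are always counted."""
    benefits = x.avoided_energy_costs
    if count_participant_nebs:
        benefits += x.participant_nebs
    costs = x.admin_costs + x.participant_costs
    return benefits / costs

example = TrcInputs(
    avoided_energy_costs=900_000.0,
    participant_nebs=300_000.0,
    admin_costs=500_000.0,
    participant_costs=400_000.0,
)
print(f"Symmetric TRC:  {trc_ratio(example):.2f}")
print(f"Asymmetric TRC: {trc_ratio(example, count_participant_nebs=False):.2f}")
```

With these invented numbers, the symmetric test gives a ratio of about 1.33, while omitting participant NEBs yields 1.00, turning a clearly cost-effective program into a marginal one.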

Program administrators tend to focus on reducing costs or reporting cumulative savings over multiple years before turning to a comprehensive evaluation of non-energy benefits. This practice, however, does not help customers or the industry fully appreciate that non-energy benefits are not zero.

New cost-effectiveness guidance in the form of a National Standard Practice Manual is being prepared by the Home Performance Coalition and is scheduled to be released in May. It reinforces the importance of unbiased and inclusive cost-effectiveness testing, and it suggests that all benefits that are aligned with a state’s energy policy merit consideration.

We know that if we want our habit-changing resolutions to stick, we need a road map that includes a clear step-by-step plan, a way to track and measure small successes, and accountability to be sure that our steps are working. Thankfully, for those of us interested in changing our energy-use habits, the SEM program design offers a compelling road map. While more work on evaluation issues remains to be done, the experience to date and the tools that are becoming available help to tell the full story.

[1] SBW Consulting, Inc., Evaluation Strategies for the Site-Specific Savings Portfolio, January 19, 2017. Available at: www.bps.gov/goto/evaluation.